Machine learning models have changed how many industries analyze data and make predictions. A first version of a model, however, is rarely the best one: accuracy and efficiency usually improve with deliberate optimization. This article surveys five techniques for optimizing machine learning models: data preprocessing, feature selection and engineering, model selection, hyperparameter tuning, and ensemble methods.

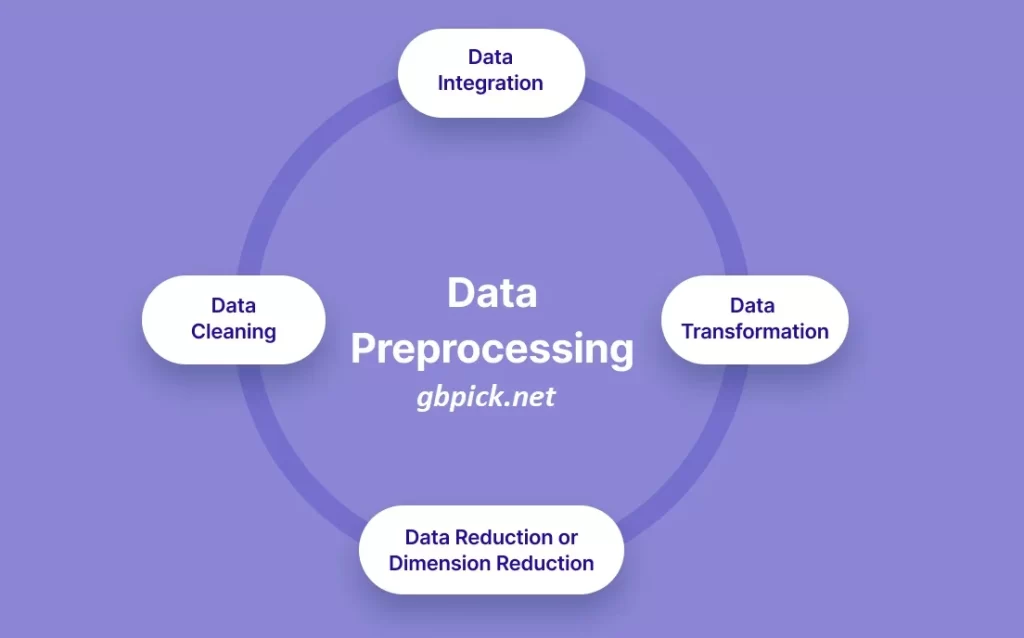

Data Preprocessing

Data preprocessing is the foundation of model optimization. It means cleaning and transforming raw data so that it is reliable and in a form the chosen algorithm can use. Common steps include handling missing values (for example, by imputing the median), dealing with outliers, and normalizing or standardizing features so that they share a comparable scale. Scaling matters most for algorithms that depend on distances or gradients, such as k-nearest neighbors, support vector machines, and neural networks; tree-based models are largely insensitive to it. Together, these steps reduce noise, improve data consistency, and enhance the model’s ability to uncover meaningful patterns.
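The three preprocessing steps named above can be sketched in a few lines of plain Python. This is a minimal illustration, not a recommended pipeline: the function names, the 1.5 × IQR clipping rule, and the toy temperature readings are all assumptions made for the example.

```python
from statistics import mean, median, pstdev, quantiles

def impute_median(values):
    """Replace missing entries (None) with the median of the observed values."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]

def clip_outliers(values, k=1.5):
    """Clip values to [Q1 - k*IQR, Q3 + k*IQR], the usual box-plot rule."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [min(max(v, low), high) for v in values]

def standardize(values):
    """Rescale to zero mean and unit (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    return [(v - mu) / sigma for v in values]

# Hypothetical sensor readings: one missing value and one obvious outlier.
raw_temperatures = [20.0, 22.0, None, 21.0, 23.0, 95.0]

imputed = impute_median(raw_temperatures)
clipped = clip_outliers(imputed)
scaled = standardize(clipped)

print("imputed:", imputed)
print("clipped:", clipped)
print("scaled: ", [round(v, 2) for v in scaled])
```

In a real project the median, clipping bounds, mean, and standard deviation should be computed on the training data only and then reused on validation and test data, so that no information leaks from the held-out sets.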

Feature Selection and Engineering

Feature selection and engineering both shape the inputs the model learns from. Feature selection identifies the most relevant and informative features, those that contribute most to the model’s predictive power, and drops the rest, which can reduce overfitting and training time. Feature engineering, on the other hand, creates new features or transforms existing ones to extract more meaningful representations, for example by combining two raw measurements into a ratio or a product.
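As a rough sketch of both ideas, the snippet below engineers a product feature and then ranks all features by the absolute Pearson correlation with the target, keeping the top two. Correlation ranking is only one simple filter method, chosen here for brevity; the feature names, the toy data, and the helper functions are hypothetical.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return 0.0  # a constant column carries no linear signal
    return cov / sqrt(vx * vy)

def select_top_k(features, target, k):
    """Rank features by |correlation with target| and keep the k best."""
    scores = {name: abs(pearson(col, target)) for name, col in features.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:k], scores

# Toy data (assumed): the price depends on the floor area, not on either side alone.
features = {
    "length_m": [1.0, 2.0, 3.0, 4.0, 5.0],
    "width_m": [5.0, 1.0, 4.0, 2.0, 3.0],
}
price = [5.0, 2.0, 12.0, 8.0, 15.0]

# Feature engineering: derive a new feature from two existing ones.
features["floor_area"] = [
    length * width
    for length, width in zip(features["length_m"], features["width_m"])
]

# Feature selection: keep the two features most correlated with the target.
selected, scores = select_top_k(features, price, k=2)
print(selected)
print({name: round(score, 3) for name, score in scores.items()})
```

On this toy data the engineered feature ranks first, because the target was constructed from it. On real data, correlation only captures linear relationships, so it is best treated as a first-pass filter.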

Model Selection

Choosing the right model is crucial for optimization, because different machine learning algorithms have distinct strengths and weaknesses. Understanding the characteristics of candidates such as decision trees, support vector machines, and neural networks helps us select the most suitable one for the given task. The size and nature of the dataset, computational requirements, interpretability, and the desired level of accuracy all influence the choice. In practice, candidates are usually compared on held-out data, for example with cross-validation, rather than on the data they were trained on.
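One common way to compare candidate models is k-fold cross-validation. The sketch below assumes a tiny one-dimensional regression problem and two deliberately simple candidates, a constant mean predictor and a least-squares line; the data, the function names, and the choice of mean squared error are illustrative assumptions.

```python
def k_fold_indices(n, k):
    """Yield (train_idx, test_idx) pairs for k contiguous folds over n samples."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = [i for i in range(n) if i < start or i >= start + size]
        yield train, test
        start += size

def fit_mean(xs, ys):
    """Baseline model: always predict the mean of the training targets."""
    m = sum(ys) / len(ys)
    return lambda x: m

def fit_line(xs, ys):
    """Simple least-squares line y = slope * x + intercept."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx
    intercept = my - slope * mx
    return lambda x: slope * x + intercept

def cv_mse(fit, xs, ys, k=5):
    """Mean squared error over all held-out predictions."""
    errors = []
    for train, test in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train], [ys[i] for i in train])
        errors.extend((model(xs[i]) - ys[i]) ** 2 for i in test)
    return sum(errors) / len(errors)

# Toy data (assumed): roughly y = 2x + 1 with small fixed perturbations.
xs = [float(x) for x in range(10)]
noise = [0.3, -0.2, 0.1, -0.4, 0.2, 0.0, -0.1, 0.3, -0.3, 0.1]
ys = [2 * x + 1 + e for x, e in zip(xs, noise)]

candidates = {"mean_baseline": fit_mean, "linear": fit_line}
results = {name: cv_mse(fit, xs, ys) for name, fit in candidates.items()}
best_model = min(results, key=results.get)
print(results, "->", best_model)
```

The folds here are contiguous to keep the example deterministic. Real datasets are normally shuffled (or stratified) before splitting, and accuracy is only one of the criteria listed above; interpretability and compute cost may favor a model with a slightly worse score.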

Hyperparameter Tuning

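Hyperparameters are settings fixed before training that control the learning process, in contrast to the parameters the model learns from data. Tuning them can significantly affect a model’s performance. Techniques like grid search, random search, and Bayesian optimization explore hyperparameter combinations systematically: grid search tries every combination in a predefined set, random search samples combinations at random, and Bayesian optimization uses the results of earlier trials to choose the next one. Each candidate should be scored on validation data or with cross-validation, so that the search finds a configuration that generalizes rather than one that merely fits the training set. Tuning hyperparameters such as the learning rate, regularization strength, or network architecture settings can lead to substantial improvements in model accuracy and generalization.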
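To make grid search and random search concrete, here is a small sketch that tunes two hyperparameters of a k-nearest-neighbors regressor, the neighbor count `k` and the `weighting` scheme, against a validation set. The model, the search space, and the toy data are assumptions chosen to keep the example self-contained; Bayesian optimization is not shown.

```python
import itertools
import random

def knn_predict(train, x, k, weighting):
    """Predict y at x from the k nearest training points (1-D inputs)."""
    neighbours = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    if weighting == "uniform":
        return sum(y for _, y in neighbours) / len(neighbours)
    # Inverse-distance weights; the small constant avoids division by zero.
    weights = [1.0 / (abs(px - x) + 1e-9) for px, _ in neighbours]
    return sum(w * y for w, (_, y) in zip(weights, neighbours)) / sum(weights)

def validation_mse(train, valid, k, weighting):
    """Mean squared error of the k-NN model on the validation set."""
    return sum((knn_predict(train, x, k, weighting) - y) ** 2
               for x, y in valid) / len(valid)

def grid_search(train, valid, space):
    """Evaluate every combination in the search space; return (params, score)."""
    names = list(space)
    best = None
    for values in itertools.product(*(space[name] for name in names)):
        params = dict(zip(names, values))
        score = validation_mse(train, valid, **params)
        if best is None or score < best[1]:
            best = (params, score)
    return best

def random_search(train, valid, space, n_trials, seed=0):
    """Evaluate n_trials randomly sampled combinations; return (params, score)."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_trials):
        params = {name: rng.choice(choices) for name, choices in space.items()}
        score = validation_mse(train, valid, **params)
        if best is None or score < best[1]:
            best = (params, score)
    return best

# Toy data (assumed): y = x^2, even x for training, odd x for validation.
train = [(float(x), float(x * x)) for x in range(0, 20, 2)]
valid = [(float(x), float(x * x)) for x in range(1, 19, 2)]
space = {"k": [1, 2, 3, 5], "weighting": ["uniform", "distance"]}

grid_params, grid_score = grid_search(train, valid, space)
random_params, random_score = random_search(train, valid, space, n_trials=4)
print("grid:  ", grid_params, round(grid_score, 4))
print("random:", random_params, round(random_score, 4))
```

Grid search is exhaustive, so on a small discrete space like this one random search can at best match it. Random search becomes attractive when the space is large or continuous and only a few hyperparameters matter, because it covers each individual dimension more densely for the same number of trials.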

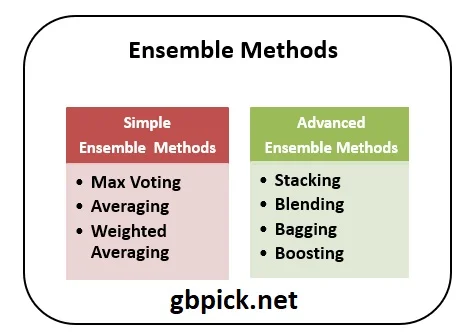

Ensemble Methods

Ensemble methods combine the predictions of multiple machine learning models, leveraging the wisdom of the crowd. The three main families are bagging, boosting, and stacking. Bagging trains each model on a bootstrap sample of the data and averages or votes over the predictions, which mainly reduces variance and overfitting; Random Forest applies this idea to decision trees. Boosting methods like AdaBoost and Gradient Boosting build models sequentially, with each new model concentrating on the errors of its predecessors: AdaBoost increases the weight of previously misclassified samples, while Gradient Boosting fits each new model to the remaining errors of the current ensemble. Stacking feeds the predictions of several models into another model, the meta-learner, which learns how to combine them. Used well, these techniques improve the robustness and accuracy of machine learning models.
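Bagging is the easiest of the three to sketch from scratch. The example below trains decision stumps (one-split trees) on bootstrap samples and combines them by majority vote. It is a simplified illustration under assumed toy data with one deliberately mislabeled point, not an implementation of Random Forest, which additionally randomizes the features considered at each split.

```python
import random
from collections import Counter

def fit_stump(points):
    """Fit a 1-D decision stump: the threshold and orientation with the fewest training errors."""
    best = None
    for threshold in sorted({x for x, _ in points}):
        for left_label in (0, 1):
            errors = sum(
                (left_label if x <= threshold else 1 - left_label) != y
                for x, y in points
            )
            if best is None or errors < best[0]:
                best = (errors, threshold, left_label)
    _, threshold, left_label = best
    return lambda x: left_label if x <= threshold else 1 - left_label

def bagging_fit(points, n_models, seed=0):
    """Train n_models stumps, each on a bootstrap sample of the data."""
    rng = random.Random(seed)
    models = []
    for _ in range(n_models):
        # Bootstrap: draw len(points) samples with replacement.
        sample = [rng.choice(points) for _ in points]
        models.append(fit_stump(sample))
    return models

def bagging_predict(models, x):
    """Combine the individual predictions by majority vote."""
    votes = Counter(model(x) for model in models)
    return votes.most_common(1)[0][0]

# Toy data (assumed): class 0 for small x, class 1 for large x,
# with the point at x = 3 deliberately mislabeled as noise.
points = [(1.0, 0), (2.0, 0), (3.0, 1), (4.0, 0), (5.0, 0),
          (6.0, 1), (7.0, 1), (8.0, 1), (9.0, 1), (10.0, 1)]

models = bagging_fit(points, n_models=25)
for x in (0.5, 10.5):
    print(x, "->", bagging_predict(models, x))
```

An odd number of models avoids tied votes in a two-class problem. Individual stumps can be thrown off by a bootstrap sample that over-represents the noisy point, but the vote across many stumps is far more stable, which is the variance reduction that bagging is meant to provide.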

Conclusion

Optimizing machine learning models is essential for achieving accurate predictions and efficient performance. Data preprocessing, feature selection and engineering, model selection, hyperparameter tuning, and ensemble methods each contribute: together they can enhance a model’s predictive power, reduce overfitting, and improve overall performance. The right combination depends on the specific problem and dataset, so treat these techniques as a toolbox, experiment with different strategies, and judge every change on held-out data.